Earlier today, we announced that Walter AI is joining Legora. We’re incredibly excited about the partnership and have already started working together on building the next generation of legal technology for our customers. Both our teams share the same vision of the future: AI performing end-to-end legal work.

2026 is the year that vision becomes reality.

We are at the beginning of the most significant transformation legal work has ever seen. Just as the arrival of the internet reshaped how people connected and how virtually every industry operated, agentic AI will fundamentally reshape how legal work gets done.

Below we break down what that transformation actually means, how the technology has finally caught up with our vision, and what it takes to build it in a way that legal teams can trust.

We’ve seen this before

To understand where legal AI is going, look at what just happened in software development.

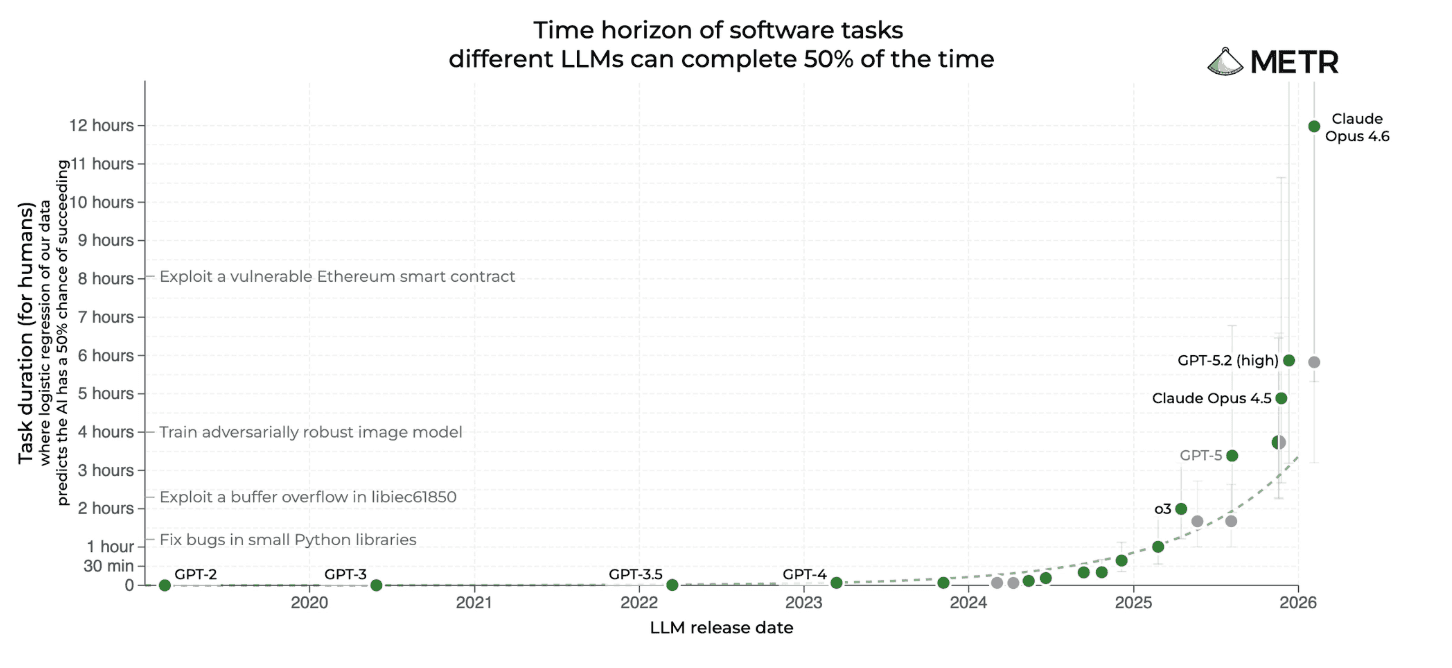

In 2021, GitHub Copilot could autocomplete a line of code. By 2023, Cursor was editing entire files. By 2025, Claude Code was completing multi-hour tasks autonomously. Today, AI agents in software are running multi-day projects spanning millions of lines of code across thousands of files, and largely getting it right.

Although the progression is gradual, there are inflection points where real-world capability sees a step-change. Anthropic's release of Claude Opus 4.5 was one of them, and perhaps the most significant since the launch of ChatGPT. AI can now more reliably complete complex, demanding software engineering tasks that would take a human 12 hours, and it's continuing to improve. (source)

Why legal is the next frontier for agents

Legal work shares the same structural DNA as software. The traits that make agents so powerful in one domain transfer directly to the other.

Both are dominated by text where precision determines outcomes. Both rely on reusable templates and established patterns; code libraries and clause libraries aren't so different. Both require version control, constant iteration, and collaboration across teams. Both have near-zero tolerance for errors, where small mistakes carry outsized consequences. And both require agents to reason across large, complex bodies of context: a codebase or a full matter history.

Legal is tracking the same curve as software development, one cycle behind. In 2023, legal AI meant simple prompts and basic document Q&A. By 2025, it meant advanced multi-step workflows. In 2026, it means agents completing complex, end-to-end legal work — autonomously, in context, with human oversight built in.

But the deeper point is this: software was never the destination. It was the proof of concept. Almost every workflow in the information work category shares the same underlying structure:

READ (ingest unstructured information)

THINK (apply domain knowledge)

WRITE (produce structured output)

VERIFY (check against standards) (source)

This sequence describes an associate working through a contract review just as accurately as it describes an engineer refactoring a codebase. Legal work has always fit this model. What's changed is that the technology can now execute at a high level of quality and reliability.
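As a rough illustration of that four-step structure, here is a minimal Python sketch of a toy contract review. Everything in it is an assumption made for the example: the `PLAYBOOK` contents, the `heading: body` input format, and the function names are hypothetical, and the "thinking" step is a plain phrase lookup standing in for real legal reasoning.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical playbook: clause heading -> phrase the firm expects to see.
PLAYBOOK = {"Governing Law": "England and Wales", "Term": "12 months"}


@dataclass
class Finding:
    clause: str
    compliant: bool


def read(raw: str) -> Dict[str, str]:
    """READ: turn unstructured text into {heading: body} pairs."""
    clauses = {}
    for line in raw.strip().splitlines():
        heading, _, body = line.partition(":")
        clauses[heading.strip()] = body.strip()
    return clauses


def think(clauses: Dict[str, str]) -> List[Finding]:
    """THINK: apply domain knowledge (here, a simple playbook lookup)."""
    return [Finding(h, PLAYBOOK[h] in clauses.get(h, "")) for h in PLAYBOOK]


def write(findings: List[Finding]) -> List[Dict[str, str]]:
    """WRITE: produce structured output."""
    return [
        {"clause": f.clause, "status": "ok" if f.compliant else "flag"}
        for f in findings
    ]


def verify(report: List[Dict[str, str]]) -> bool:
    """VERIFY: check the output covers every clause in the playbook."""
    return {row["clause"] for row in report} == set(PLAYBOOK)


def review(raw: str) -> List[Dict[str, str]]:
    report = write(think(read(raw)))
    if not verify(report):
        raise ValueError("report does not cover every playbook clause")
    return report
```

Calling `review` on a two-line contract whose term is 24 months would mark the governing-law clause as `ok` and flag the term clause. The same four functions could just as easily wrap a code refactor, which is the point of the comparison.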

What agentic really means, and why it matters

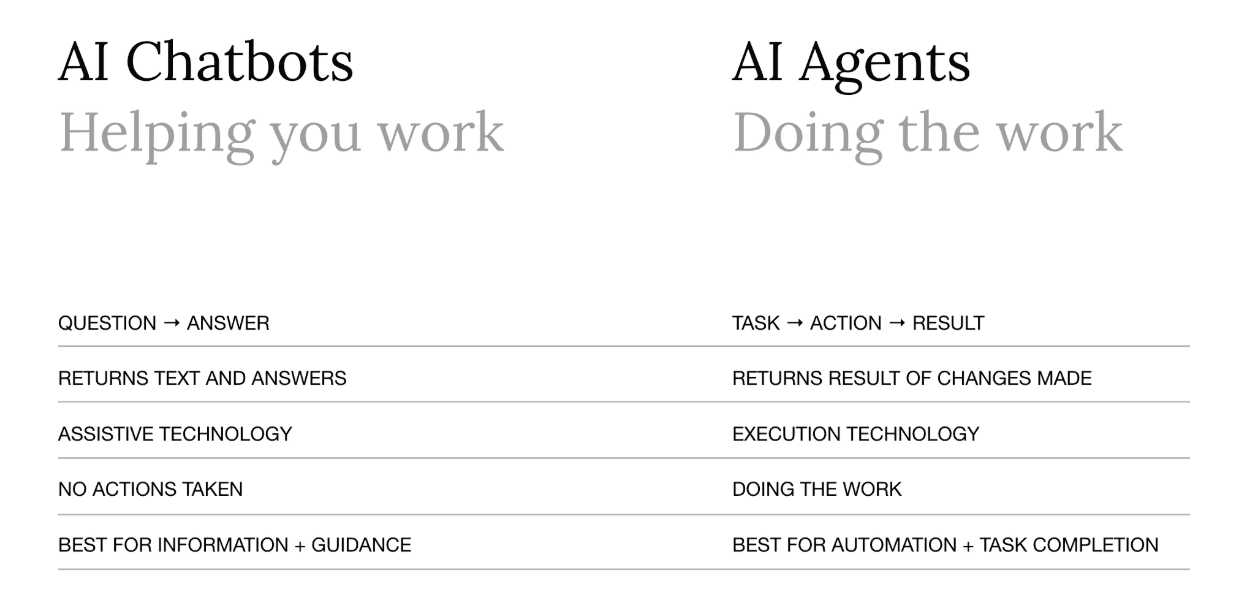

Most legal AI tools today are chatbots. That's not a criticism—chatbots have delivered real value. But it's important to be precise about what they are and what they aren't.

A chatbot receives a question and provides an answer. It's assistive technology. It helps you work.

An agent receives a task, creates a plan of action, executes the plan, and returns a result. It's execution technology. It does the work, end-to-end.

This distinction matters more than it might seem. Some vendors market their tools as "agentic" today, but the truth is they are better described as chained workflows—a fixed sequence of steps defined in advance, where each prompt feeds into the next.

That's automation. It's useful, but it isn't agency.

What makes it agentic is the agent loop: a continuous cycle of reasoning, action, and evaluation that runs until a task is complete. Rather than following a predetermined path, the agent assesses what it knows, decides what to do next, executes it, evaluates the result, and iterates — pulling in the right skills and context as the work demands. You don't define every step in advance. The agent figures out what's needed and adapts as it goes. That's the difference between a tool that follows instructions and one that completes work.
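To make the contrast concrete, here is a minimal Python sketch of a chained workflow next to an agent loop. It is illustrative only: the tool names, the `state` dictionary, and the `choose_next_action` rule (a hard-coded stand-in for model reasoning) are hypothetical assumptions, not a description of how any particular product works.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

State = Dict[str, Any]


# Hypothetical tools. A real agent would call research, DMS, or drafting tools.
def search_precedents(state: State) -> None:
    state["precedents"] = ["clause A"]


def draft_clause(state: State) -> None:
    state["draft"] = f"Draft based on {state['precedents'][0]}"


TOOLS: Dict[str, Callable[[State], None]] = {
    "search_precedents": search_precedents,
    "draft_clause": draft_clause,
}


def chained_workflow(state: State) -> State:
    """Automation: a fixed sequence of steps defined in advance."""
    for step in (search_precedents, draft_clause):
        step(state)
    return state


def choose_next_action(state: State) -> Optional[str]:
    """Stand-in for model reasoning: decide the next step from what is known."""
    if "draft" in state:
        return None  # evaluation says the task is complete
    if "precedents" not in state:
        return "search_precedents"
    return "draft_clause"


def agent_loop(state: State, max_steps: int = 10) -> Tuple[State, List[str]]:
    """Agency: reason, act, evaluate, and repeat until the task is done."""
    trace: List[str] = []
    for _ in range(max_steps):
        action = choose_next_action(state)  # reason about current state
        if action is None:                  # evaluate: nothing left to do
            break
        TOOLS[action](state)                # act
        trace.append(action)
    return state, trace
```

Given an empty state, both versions run the same two steps. Given a state that already holds precedents, the loop skips the search and goes straight to drafting, while the chained workflow would run the search again regardless. The `max_steps` cap is one simple way to keep such a loop bounded.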

But it doesn’t stop there. Three categories of legal work become possible at this capability level:

Long-context: agents can read and work across an entire matter file at once, not just a single document, unlocking exhaustive review at a scale no human team can match.

End-to-end: agents can complete multi-step legal tasks from start to finish using a variety of tools. That ranges from legal due diligence, to complex drafting, to long-running workflows that use research, web search, file management, DMS, and drafting tools, all while checking in with humans at key decision points along the way.

Memory-driven: agents can remember prior positions, preferred drafting styles, and client preferences across sessions, getting smarter and more tailored the more they're used.

In practice, this means agents don't just review clauses; they redline the document. They don't just suggest research angles; they compose and execute queries, synthesize results, and incorporate findings. They don't just identify issues in a data room; they organize it, review it exhaustively, and produce the diligence report.

This is not incremental. It is a different category of tool.

Models aren’t enough

The frontier models powering today's AI are advancing at an incredible pace. But a powerful model and a purpose-built legal platform are two different things—and the gap between the two is where the real risks live. If deployed without legal-specific knowledge, data sources, context, governance, or oversight, even the best models can’t meet the demands of real legal work.

Legora is purpose-built for legal work. What that looks like in practice:

Full matter context: every agent action is grounded in the client and matter context, the applicable playbooks, and the firm's knowledge and standards

Review and approval flows: humans stay in the loop at the points that matter, with structured checkpoints built into every workflow

Complete audit trails: every action an agent takes is traceable, reviewable, and defensible

Enterprise governance: standardized workflows that can be deployed, managed, and controlled across an entire organization, balancing performance and risk management

Legal-specific tools: purpose-built tooling for how legal work actually gets done, from tabular review, to DMS integrations, to redlining, to research

This is what separates a legal platform from a frontier model with a legal plug-in. And it's what makes agentic workflows safe to deploy at scale inside a law firm.
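For readers who think in code, the review checkpoints and audit trails described above can be pictured with a small Python sketch. It is a hypothetical illustration, not Legora's implementation: the `AuditTrail` class, the `run_with_checkpoint` function, and the entry fields are all assumed names chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass
class AuditTrail:
    """Append-only record of who did what, and when."""
    entries: List[Dict[str, str]] = field(default_factory=list)

    def record(self, actor: str, action: str, detail: str) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })


def run_with_checkpoint(
    proposed_edit: str,
    approve: Callable[[str], bool],  # the human decision, passed in as a callback
    trail: AuditTrail,
) -> bool:
    """Log the proposal, pause for human review, and apply only if approved."""
    trail.record("agent", "propose", proposed_edit)
    approved = approve(proposed_edit)
    trail.record("reviewer", "approve" if approved else "reject", proposed_edit)
    if approved:
        trail.record("agent", "apply", proposed_edit)
    return approved
```

In this sketch nothing is applied without an approval entry preceding it in the trail, so the sequence of entries can be replayed later to see what the agent proposed and who signed off.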

Pulling the future forward

Legora and Walter AI were founded on opposite sides of the world, with the same vision of the future: AI performing end-to-end legal work. The recent advances in agentic technology have made that vision a reality. Now we're building it together, faster.

If you're a legal team thinking about what the future looks like, we'd love to show you what we've been building.